Introduction

LivePose runs computer vision models on live video input and sends the results to any OSC-compatible application. Load a model, select your input feed, and start streaming data.

Quick start

- Launch LivePose and select your camera

- Load an .onnx model

- Configure your OSC output and start streaming

For more details, see Installation and Usage.

Installation

Prerequisites

- A supported platform: macOS, Linux (x86_64), or Windows

- A connected camera

Download

Pre-built packages are available after signing up at toolbox.sat.qc.ca. Download the artifact for your platform.

Models

Pre-built .onnx models are available from the model-storage release. Download the model(s) you want and point LivePose to them at launch.

Usage

LivePose lets you run computer vision models on live video and stream the results over OSC.

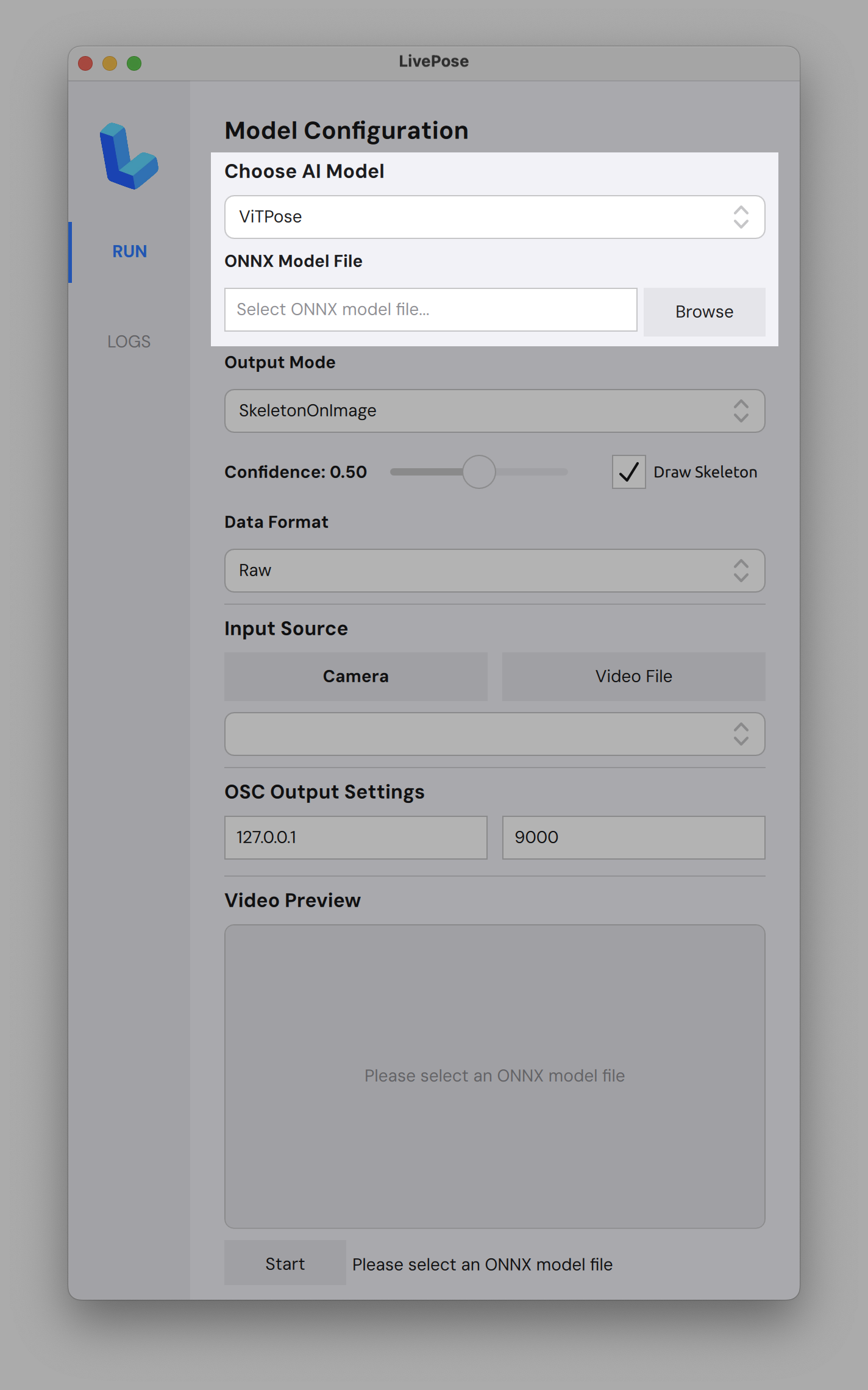

Loading a model

- Click Browse next to ONNX Model File and select an .onnx file

- Models are available from the model-storage release

- Choose your AI Model (e.g. ViTPose) and Output Mode from the dropdowns

- Adjust the Confidence threshold and toggle Draw Skeleton as needed

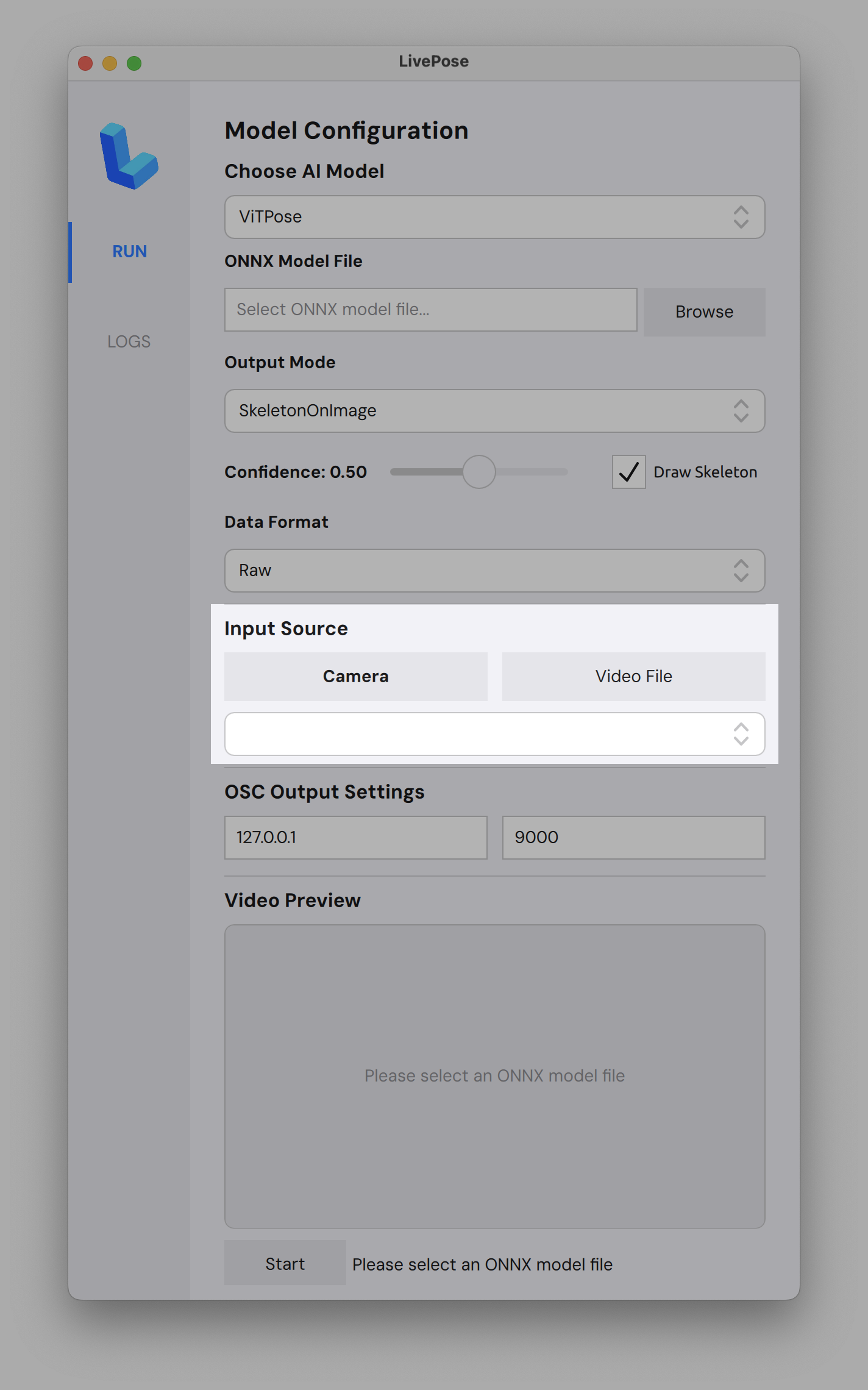

Camera

- Under Input Source, select Camera or Video File

- LivePose automatically detects connected cameras; pick yours from the dropdown

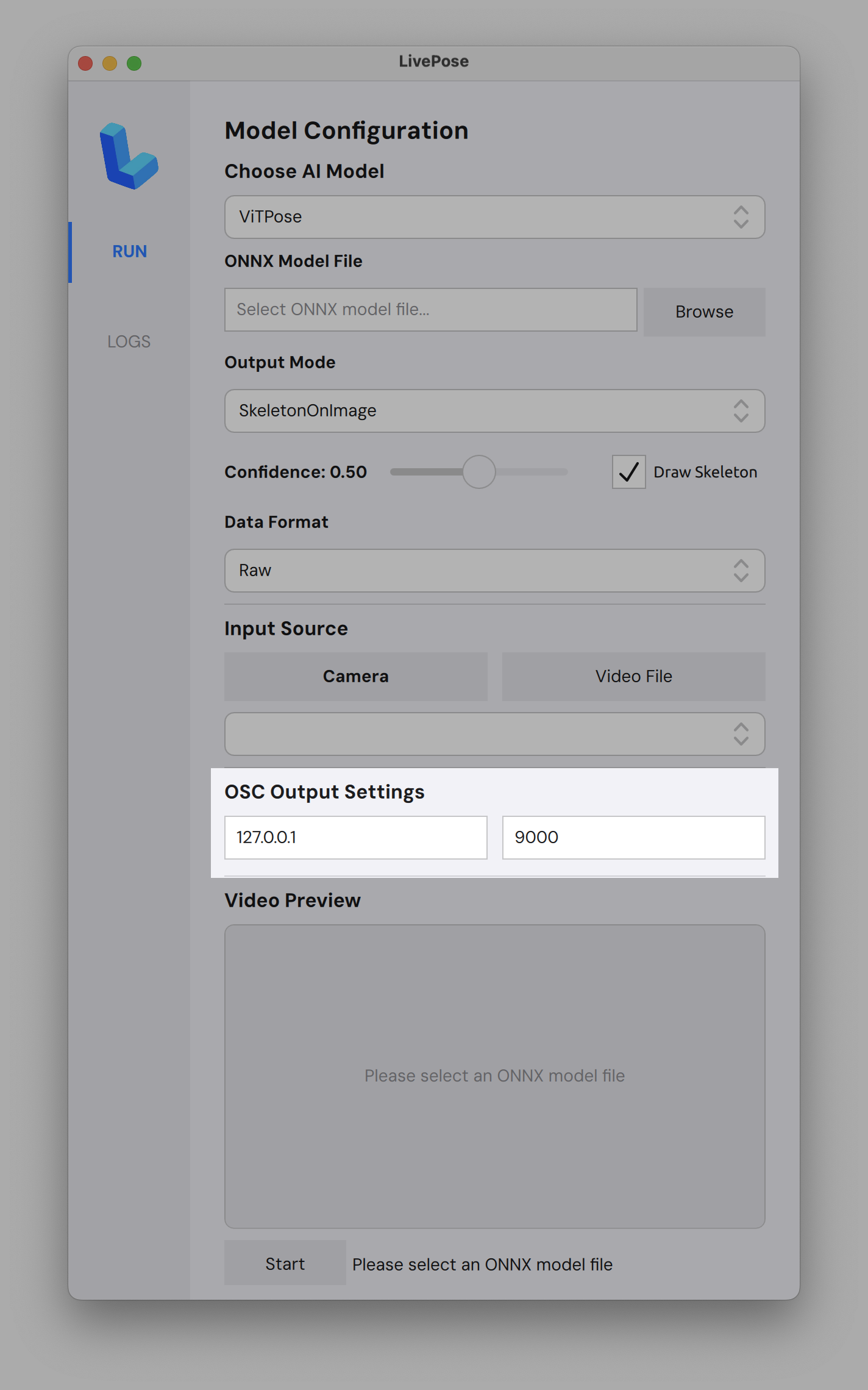

OSC output

- Set the IP and port for your OSC destination (default: 127.0.0.1:9000)

- Hit Start; pose data will be sent in real time

- Use oscdump in your terminal for debugging

Use cases

Connect to Pure Data, Max/MSP, Processing, p5.js, TouchDesigner, SuperCollider, openFrameworks, or any OSC-compatible software. Examples coming soon!